Sponsorship

Mojeek

Mojeek is on a mission to build the world's alternative search engine; a search engine that does what's right, that values and respects your privacy, whilst providing its own unique and unbiased search results from its own independently-crawled index.

Search is often considered the gateway to the Web, which is why we believe it's critical that choices exist, choices that take a different approach. By putting the people who use Mojeek first and using technology built from the ground-up, we're here to offer that choice.

Welcome to the 41 new Consolites who joined last week! 👋

Not subscribed to Console? Subscribe now to get a list of new open-source projects curated by an Amazon engineer in your email every week.

Already subscribed? Why not spread the word by forwarding Console to the best engineer you know?

The Console Career Service

Want to make more money for your work? Let us find you a new, higher-paying job for free! Sign up for The Console Career Service today! The benefits of signing up include:

Automatic first-round interviews

One application, many jobs (1:N matching)

Free candidate preparation service

New opportunities updated regularly

All roles, from PM to SWE to BizOps

High potential, venture-backed, and open-source opportunities

Even if you’re not actively looking, why not let us see what’s out there for you?

Ready to sign up? Click below and sign up for free in less than 5 minutes👇

Projects

Hub

Hub is a dataset format for AI. Easily build and manage datasets for machine and deep learning. Stream data in real time and version-control it. Datasets in Hub format get instantly visualized via app.activeloop.ai (seen in the gif above).

language: Python, stars: 3669, watchers: 54, forks: 304, issues: 62

last commit: November 17, 2021, first commit: August 09, 2019

social: https://www.activeloop.ai/

repo: https://github.com/activeloopai/Hub

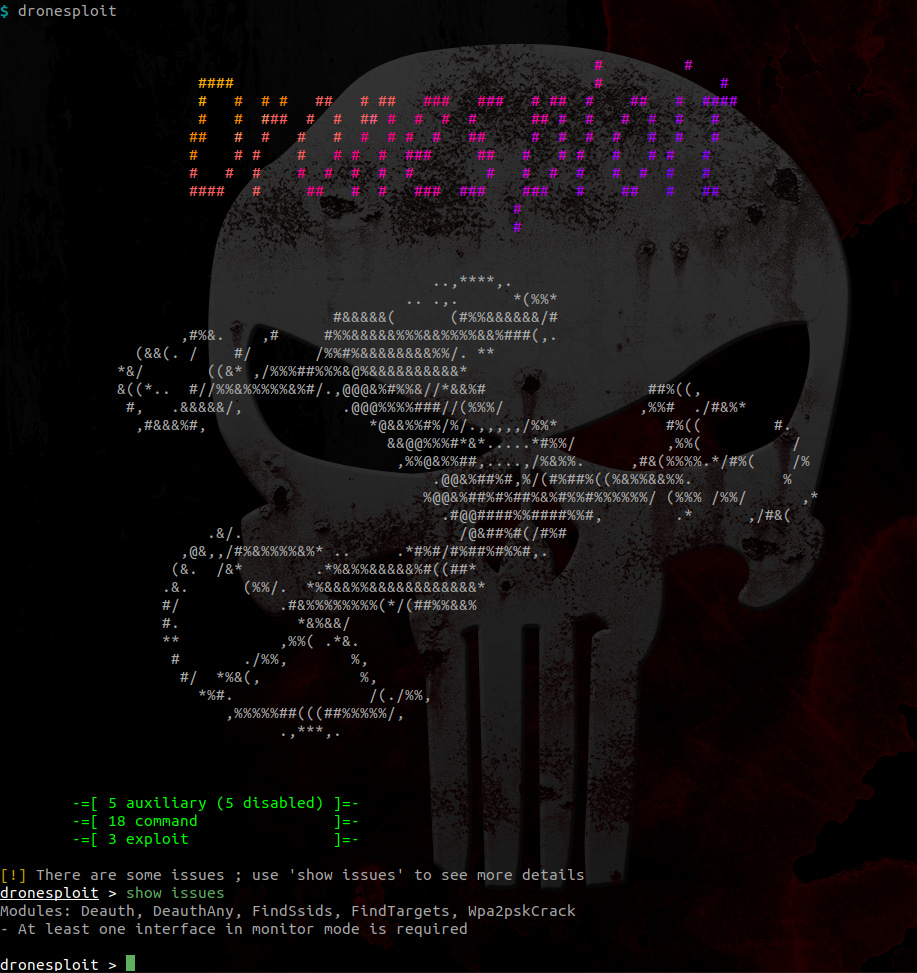

dronesploit

This CLI framework is based on sploitkit and is an attempt to gather hacking techniques and exploits especially focused on drone hacking. For ease of use, the interface has a layout that looks like Metasploit.

language: Python, stars: 855, watchers: 32, forks: 150, issues: 0

last commit: November 05, 2021, first commit: September 08, 2019

social: https://twitter.com/alex_dhondt

repo: https://github.com/dhondta/dronesploit

create-rust-app

Set up a modern rust+react web app by running one command.

language: Rust, stars: 172, watchers: 2, forks: 10, issues: 1

last commit: November 17, 2021, first commit: May 08, 2021

repo: https://github.com/Wulf/create-rust-app

Console is powered by donations. We use your donations to grow the newsletter readership via advertisement. If you’d like to see the newsletter reach more people, or would just like to show your appreciation for the projects featured in the newsletter, please consider a donation 😊

An Interview With Davit of Activeloop

Hey Davit! Thanks for joining us! Let’s start with your background. Where have you worked in the past and where are you from?

I'm originally from Armenia. I used to be a Ph.D. candidate at the Princeton Neuroscience Lab. We worked on reconstructing the connectome (or the connections of neurons) inside of a mouse brain. I faced lots of challenges while working with petabyte-scale computer vision data, and that's why I started Activeloop, the company behind the open-source dataset format for AI called Hub (more on this later in the newsletter).

What’s your most controversial programming opinion?

There should be a database for images. For now, most people (even on Quora!) think we should resort to old methods such as file systems to work with large unstructured data (images, videos, audio, etc.). I respectfully disagree.

Who or what are your biggest influences as a developer?

From a research perspective, Deep Learning pioneers G. Hinton, Y. LeCun, and Y. Bengio, and from a startup perspective - Paul Graham.

What is your favorite software tool?

Can I say Hub? :)

Deep learning frameworks such as PyTorch/TensorFlow.

Finally, Docker. Running a simulation (container) inside a simulation (VM) in real-world scenarios (bare metal).

If you could dictate that everyone in the world should read one book, what would it be?

Principles by Ray Dalio.

If you had to suggest 1 person developers should follow, who would it be?

If you could teach every 12 year old in the world one thing, what would it be and why?

How to train d̶r̶a̶g̶o̶n̶s̶ models.

To train a model, you need to specify the architecture, the data, and the loss function. If the loss function is set incorrectly, the model games it instead of learning the behavior the data scientist intended. It is very similar to how you set goals for yourself or others. You have to be very careful to measure things objectively and to make sure that gaming the measure doesn't benefit the model (or you).
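A minimal sketch of that point, using made-up data and a deliberately useless "model" (both hypothetical, not taken from Hub): when the objective is plain accuracy on an imbalanced dataset, always predicting the majority class scores well while learning nothing about the cases that matter.

```python
# Toy, made-up data: 5 positive examples and 95 negative ones.
labels = [1] * 5 + [0] * 95


def always_negative(_example):
    """A 'model' that ignores its input and always predicts the majority class."""
    return 0


def accuracy(preds, targets):
    """Fraction of predictions that match the targets."""
    return sum(p == y for p, y in zip(preds, targets)) / len(targets)


def recall(preds, targets):
    """Fraction of true positives that the predictions actually caught."""
    preds_on_positives = [p for p, y in zip(preds, targets) if y == 1]
    return sum(preds_on_positives) / len(preds_on_positives)


preds = [always_negative(i) for i in range(len(labels))]

acc = accuracy(preds, labels)  # high: the objective looks satisfied
rec = recall(preds, labels)    # zero: the intended behavior is missing

print(f"accuracy={acc:.2f} recall={rec:.2f}")
```

The measure being optimized (accuracy) and the behavior that was wanted (finding positives) diverge, which is the "tricking the game" situation in miniature.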

If I gave you $10 million to invest in one thing right now, where would you put it?

We've just raised a seed fund to build a database for AI, but I definitely would not mind an additional $10 million for all the great things we envision. Other than that, I'd invest in universal basic income research and how to make it sustainable for everyone.

What have you been listening to lately?

Hans Zimmer soundtracks and a16z infra podcasts.

How do you separate good project ideas from bad ones?

Three dimensions: Does this make you and others excited? Does it enable you to be the absolute best in something (or does it outperform all other ideas in the space)? How much value does it create?

Where did the name for Hub come from?

Based on the number of tools called Hub, I guess it's top of mind for all developers. We picked the name Hub because I had a vision of a tool that makes all the machine learning workflows easy and contains vast amounts of computer vision (and not only) datasets that a user could access instantly. A machine learning hub, if you will.

Why was Hub started? Who, or what was the biggest inspiration for Hub?

At Princeton, I seriously struggled when I worked with data. It took me weeks to download the public datasets like ImageNet to start working with them. I found out the hard way that the current solutions for storing the data we create, meaning databases, data lakes, and data warehouses, are completely unsuitable for storing computer vision data. There are numerous file formats, compression techniques, and multiple locations to take care of. As the dataset grows, all this becomes highly cumbersome and time-consuming to manage, and the data often ends up in silos. *cue "but there must be a better way!"* That is why I started Hub, which grew into Activeloop.

Are there any overarching goals of Hub that drive design or implementation?

Minimizing the number of intermediaries between storage and compute to stream datasets to Deep Learning models.

If so, what trade-offs have been made in Hub as a consequence of these goals?

Designing a database or even a format lies in navigating through several very complex and high-dimensional tradeoffs given the specific use case you are tackling.

At some point, it felt like a whack-a-mole game where no optimal solution existed, and we had to make tradeoffs.

One such choice is determining how to chunk the datasets. Should you go row-wise or column-wise? It's a basic question that has spawned widely adopted databases and data warehouses. However, when you work with unstructured data that has varying shapes across some of the dimensions, this gets even trickier. You are left on a continuum, with fully auto-indexed array storage at one end and blob storage, including the file system, at the other. We have experimented with everything on the spectrum and still think the solutions do not satisfy the constraints data scientists have. In any case, you default to the CAP theorem or some alternative form of it.
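To make the row-wise versus column-wise question concrete, here is a toy sketch. The records, field names, and chunk size are all assumptions chosen for illustration, and this is not how Hub actually lays out its chunks. It simply counts how many chunks each layout has to touch for two access patterns.

```python
# Made-up records with three fields: (image_id, label, width).
FIELDS = ("image_id", "label", "width")
records = [(i, i % 3, 100 + i) for i in range(8)]
CHUNK = 4  # values per chunk (hypothetical)


def chunk(seq, size):
    """Cut a flat sequence into consecutive chunks of at most `size` values."""
    return [seq[i:i + size] for i in range(0, len(seq), size)]


# Row-wise: whole records are written one after another, then chunked.
row_major = [value for rec in records for value in rec]
row_chunks = chunk(row_major, CHUNK)

# Column-wise: each field is stored contiguously, then chunked on its own.
col_chunks = {
    name: chunk(list(col), CHUNK) for name, col in zip(FIELDS, zip(*records))
}


def row_wise_chunks_for_field(field_index):
    """Count row-wise chunks touched when reading one field of every record."""
    positions = range(field_index, len(row_major), len(FIELDS))
    return len({pos // CHUNK for pos in positions})


def row_wise_chunks_for_record(record_index):
    """Count row-wise chunks touched when reading one whole record."""
    start = record_index * len(FIELDS)
    return len({pos // CHUNK for pos in range(start, start + len(FIELDS))})


# Access pattern 1: read every label (a typical training-time scan).
labels_row_cost = row_wise_chunks_for_field(FIELDS.index("label"))
labels_col_cost = len(col_chunks["label"])

# Access pattern 2: read one complete record.
record_row_cost = row_wise_chunks_for_record(0)
record_col_cost = len(FIELDS)  # one chunk per field

print(labels_row_cost, labels_col_cost, record_row_cost, record_col_cost)
```

In this toy setup, scanning one field is cheaper column-wise, while fetching a whole record is cheaper row-wise. Neither layout wins on both access patterns, and variable-shaped samples such as images make the choice harder still.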

What is the most challenging problem that’s been solved in Hub, so far (code links to any particularly interesting sections are welcomed)?

Enabling users to access ImageNet in seconds and then connecting it effortlessly to SageMaker with high GPU utilization. I was really proud of our team for achieving that result. I can't share that code, but uploading Places365 (a 1.8M image dataset) in under 50 minutes comes really close.

Are there any competitors or projects similar to Hub? If so, what were they lacking that made you consider building something new?

I'd say our biggest competitors right now are legacy data formats, like HDF5 (and the Snowflakes of the world). Convincing people to adopt a new format is not easy. However, as the design patterns for ML pipelines evolve, we will need to shift to a next-gen way of working with (petabyte-scale) data, to train arbitrarily large models on arbitrarily large datasets in the cloud. HDF5 (and similar formats) are not fit to enable the next-gen machine learning stack (for a couple of reasons). That's where Hub comes into play.

What was the most surprising thing you learned while working on Hub?

You can stream data over the network as fast as you read from the disk.

What is your typical approach to debugging issues filed in the Hub repo?

Unlike others, I hate PDB; I prefer recreating a minimal reproducible script and finding the root cause through rapid development iteration cycles (e.g., applying binary search to the problem to identify the bug).
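The binary-search style of bug hunting described above can be sketched roughly as follows. The version names and the `is_bad` check are hypothetical stand-ins for "run the minimal reproducible script against this candidate", and the sketch assumes the candidates are ordered so that every good one comes before every bad one.

```python
def first_bad(candidates, is_bad):
    """Return the index of the first candidate for which is_bad() is True.

    Assumes all good candidates precede all bad ones and that the last
    candidate is known to be bad.
    """
    lo, hi = 0, len(candidates) - 1
    while lo < hi:
        mid = (lo + hi) // 2
        if is_bad(candidates[mid]):
            hi = mid        # the first bad candidate is at mid or earlier
        else:
            lo = mid + 1    # the first bad candidate is after mid
    return lo


# Hypothetical history: the bug was introduced in "v6".
versions = [f"v{n}" for n in range(1, 9)]
checked = []


def is_bad(version):
    """Stand-in for running the reproduction script against one version."""
    checked.append(version)
    return int(version[1:]) >= 6


culprit = versions[first_bad(versions, is_bad)]
print(culprit, "found after", len(checked), "checks")
```

Each check halves the remaining search space, so eight candidates need only three runs of the reproduction script here. The same halving works on inputs or config options when a failing case can be split in two.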

What is the release process like for Hub?

We have automatic CI/CD that runs unit and integration tests, backward-compatibility tests (since it's a format), and benchmarks against previous versions on every release candidate. We then manually test the code to make sure new features work as expected, and push the release.

Is Hub intended to eventually be monetized if it isn’t monetized already?

Hub is free and will always be free. We also offer any researcher / professional / citizen data scientist free storage of up to 300 GB. The dataset format for AI is free, but we're building the database for AI, with visualization, version control, and querying capabilities (the gif below shows how you can visualize the entire ImageNet in seconds in our visualization app).

Hub is an enabler for our database for AI, and the UI will help teams understand and explore their datasets, collaborate on, and stream datasets to their favorite ML tools, as well as fully utilize their GPUs. This is how we're planning to monetize the project.

What is the best way for a new developer to contribute to Hub?

Join our Slack Community, say hi (we're a fun bunch of people). Pick a good first issue, and follow our contribution guidelines for a more streamlined approach.

If you plan to continue developing Hub, where do you see the project heading next?

Applying machine learning on the storage layer itself.

What motivates you to continue contributing to Hub?

Inefficiencies data scientists face every day while operating on top of data. As a data scientist myself, it was (and still is) a problem I face daily, and I won't stop until I fully fix the problem. :)

Do you have any other project ideas that you haven’t started?

In another life, I'm starting my own Pixar and producing thought-provoking, inclusive animated movies that inspire curiosity in people of all ages.

Where do you see software development heading next?

Online differentiable programming: basically, end-to-end systems that are learnable while in production (Software 2.0, a term coined by Andrej Karpathy). Deep neural networks are just one subset of it. A great example is JAX. When it comes to ML specifically, I think data-centric AI will be a prominent direction for a while. In almost every decade, we witness a new epoch in the field of machine learning. The preceding ten years have been about improving machine learning models and making them accessible to regular data scientists and developers. The next decade will be about datasets (more on this here).

Where do you see open-source heading next?

I love open-source because it allows people to build or help improve the software they'd love to use. This decade saw the establishment of open-source software within enterprises, startups, and universities alike (let's hope the recent news around Prophet and Zillow won't impact it as much). The next decade of open source lies in the interoperability of projects and adding incentive mechanisms for developers (possibly through crypto?). Hopefully, Hub will help unify the ML stack through a unified dataset format for AI.

Do you have any suggestions for someone trying to make their first contribution to an open-source project?

Just… do it. A lot of us don't know where to start. Discover new projects by enrolling in Hacktoberfest or enrolling in Google Summer of Code. If you're slightly older, pick a project/tool that you used or one that excites you. Community is super important for a first-timer’s experience, so seek out projects that care about their contributors, are welcoming, and guide you when needed.