Console 40

1 second, torrent-net, and Devilbox

Sponsorships

The Daily Upside

The Daily Upside is a business newsletter that covers the most important stories in business in a style that’s engaging, insightful, and fun. Written by a former investment banker, The Daily Upside delivers quality insights and surfaces unique stories you won’t read elsewhere.

It’s completely free, and you are guaranteed to learn something new every day.

devilbox

Devilbox is a modern Docker LAMP stack and MEAN stack for local development.

language: PHP, stars: 3278, watchers: 110, forks: 436, issues: 43

last commit: December 21, 2020, first commit: October 09, 2016

https://twitter.com/everythingcli

github1s

github1s opens a GitHub repo in VS Code in 1 second. Go to https://github1s.com/microsoft/vscode to try an example out.

language: TypeScript, stars: 10571, watchers: 66, forks: 241, issues: 37

last commit: February 13, 2021, first commit: June 16, 2019

torrent-net

torrent-net was a distributed search engine using BitTorrent and SQLite.

language: C, stars: 845, watchers: 27, forks: 35, issues: 3

last commit: April 24, 2017, first commit: April 14, 2017

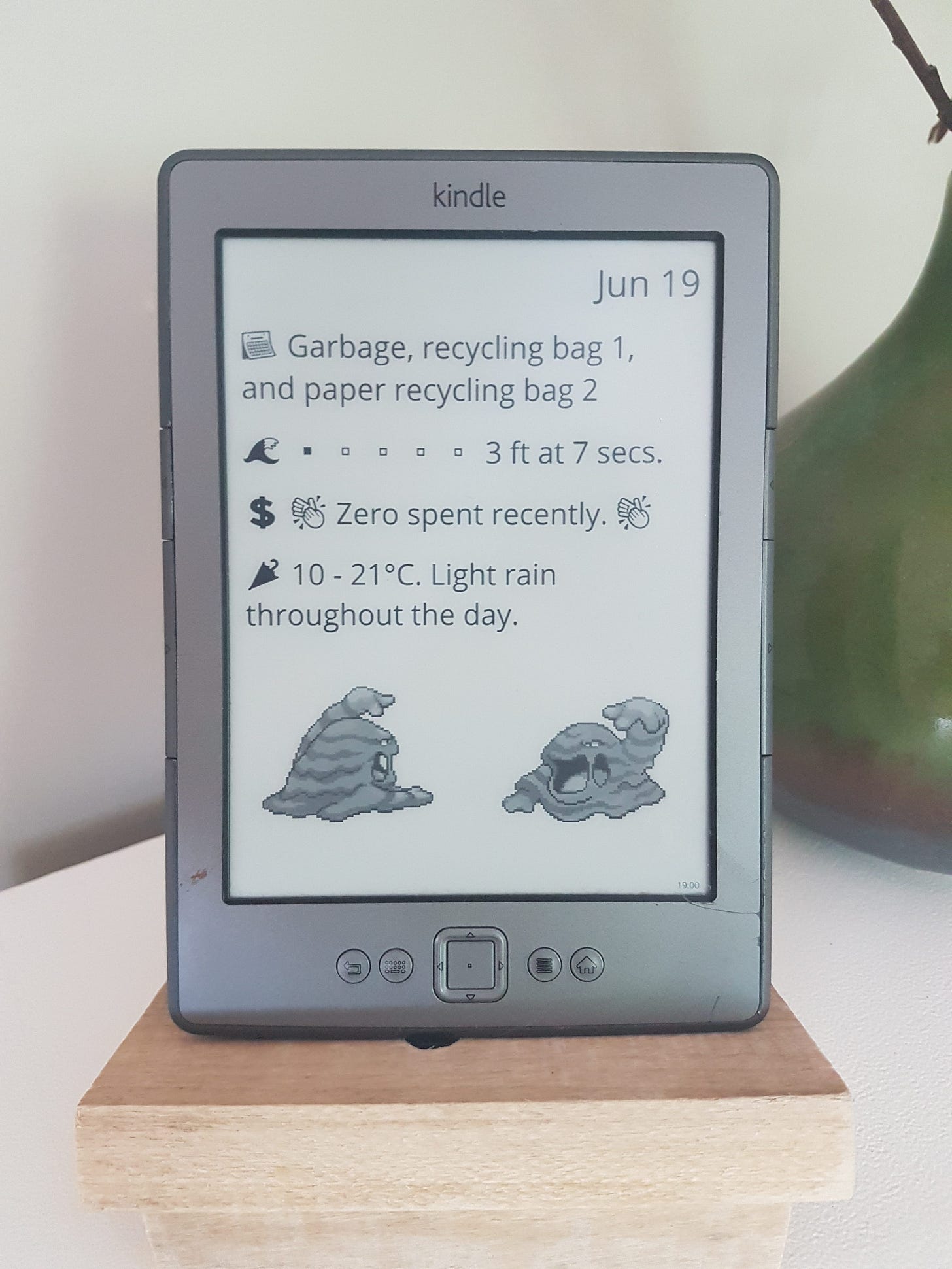

life-dashboard

life-dashboard is a low power, heads up display for every day life running on a Kindle.

language: Rust, stars: 672, watchers: 12, forks: 16, issues: 6

last commit: October 10, 2020, first commit: August 24, 2018

https://www.instagram.com/davidhampgonsalves/

Help Wanted

If you’re interested in posting a help wanted ad for your project to thousands of open-source developers, send an email to console.substack@gmail.com

An Interview With Cytopia of Devilbox

What is your background?

The very first touch points I had with code actually came from gaming. Back in those days, games were shipped on CDs and in order to play them, you had to have the CD inserted in the drive at all times, even if the game was fully installed. If you lent CDs to your friends and still wanted to play them, you somehow had to trick the games. This was usually done by opening the exe file with a disassembler, finding the error message and changing the “jump if equal” (JE) command into a “jump if not equal” (JNE) command - or vice versa. This made the game start even if the CD was not inserted. This magic had a big impact on me. Being able to manipulate the computer to your liking by simply knowing how stuff works was a big deal.

From there I found my way into simple 2D game development: C++ on Visual Studio 6.0 and DirectDraw. I had read all the “learn C++ in 24 hours/days” tutorials and I was hyped. I continued my way to 3D games with OpenGL and eventually ended up with C and nasty pointers.

At some point I was introduced to web pages and found selfhtml.org - the largest resource at that time. Then everything continued naturally into backend development and I landed my first freelance jobs alongside school. This path was stable for a couple of years and I went on learning about Linux systems and servers - loved it.

I was really enjoying programming and also server administration, but at that time, jobs only allowed you to do one or the other, and I felt very unsatisfied as both disciplines had become a great passion. I was switching back and forth between them until DevOps finally hit the market. This could not have been any better: I was finally able to combine both disciplines on a professional level.

Currently, I have a very similar feeling with DevOps and the area of security, which I’ve been digging into for a couple of years now. But, it turns out the industry is merciful and there is a thing called DevSecOps as well.

What's an opinion you have that most people don't agree with?

I myself am a big fan of automated tests and I implement them like crazy. Most people I know want their tests to finish as quickly as possible (<2min) and optimize them for time. That is, of course, valid, especially in short-lived deployments, such as on Kubernetes, where you want your service fix to go live right away. For me, the run time of a test is not a meaningful metric - at least not for the kinds of projects I am working on at GitHub. I’d rather have thorough tests, even if they take many hours. This is also the part that eats up most of my time on the projects and, in fact, the tests usually make up the majority of the code in the repository. I just want to be able to release with confidence and provide stable software (as stable as it can get, of course).

Devilbox, for instance, runs more than 24 hours on Travis CI for each pull request (the actual run time is around 9 hours) and it is running all of the tests against most combinations of available services and their respective versions. Since the introduction of “credits” on Travis, I usually run out of them at the very beginning of each month, so I had to port many CI tests to GitHub Actions.
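To get a feel for why testing “most combinations” adds up so quickly, here is a minimal Python sketch of a test matrix. The service names and version lists are hypothetical and chosen only for illustration; they are not taken from the Devilbox CI configuration.

```python
from itertools import product

# Hypothetical services and versions, for illustration only.
SERVICES = {
    "php": ["5.6", "7.4", "8.0"],
    "httpd": ["apache-2.4", "nginx-stable"],
    "mysql": ["mysql-5.7", "mariadb-10.5"],
}


def build_matrix(services):
    """Return one dict per combination of service versions."""
    names = list(services)
    version_lists = (services[name] for name in names)
    return [dict(zip(names, combo)) for combo in product(*version_lists)]


matrix = build_matrix(SERVICES)
print(len(matrix), "jobs; first job:", matrix[0])
```

Even this small example yields 3 × 2 × 2 = 12 jobs, and every additional version of any one service multiplies the total.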

CI is also some kind of playground to me. From time to time I take a couple of weekends to try out all kinds of ideas while neglecting the actual development. github.com/devilbox/vhost-gen for instance, which is a CLI tool to generate virtual host templates for Apache or Nginx, fuzzes for 15 minutes on each Python version during a test. It basically throws all kinds of random arguments at the tool for that duration, trying to produce an error (github.com/devilbox/vhost-gen/blob/master/.ci/fuzzy.sh). I was quite surprised how many times my tests failed and how error-prone my code actually was. This method has really helped to make it rock-solid.
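The linked fuzzy.sh is the authoritative version. What follows is only a minimal Python sketch of the general idea of time-boxed argument fuzzing, under a few assumptions: the function names are made up for this example, and treating a Python traceback on stderr as “an error” is one possible failure criterion, not necessarily the one the project uses.

```python
import random
import string
import subprocess
import sys
import time


def random_args(rng, max_args=4, max_len=8):
    """Build a random argument list from a small set of CLI-like characters."""
    alphabet = string.ascii_letters + string.digits + "-=/."
    count = rng.randint(0, max_args)
    return [
        "".join(rng.choice(alphabet) for _ in range(rng.randint(1, max_len)))
        for _ in range(count)
    ]


def crashed(result):
    """Assumed failure criterion: an unhandled Python exception was printed."""
    return "Traceback" in result.stderr


def fuzz(command, seconds, is_failure, seed=0):
    """Run `command` with random arguments until `seconds` have elapsed.

    Returns the argument lists for which is_failure(result) was true, so that
    each one can be replayed and turned into a regression test.
    """
    rng = random.Random(seed)  # fixed seed makes a run reproducible
    deadline = time.monotonic() + seconds
    failures = []
    while time.monotonic() < deadline:
        args = random_args(rng)
        result = subprocess.run(command + args, capture_output=True, text=True)
        if is_failure(result):
            failures.append(args)
    return failures
```

Calling `fuzz([sys.executable, "tool.py"], 15 * 60, crashed)` would exercise a hypothetical `tool.py` for 15 minutes. A clean usage error with a non-zero exit code is not counted as a failure here, only an unhandled exception.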

The downside of all this, of course, is that I also must maintain all the tests and they do eventually break over time.

That fuzzing is interesting, do you have any failed test case examples?

What is one app on your phone that you can’t live without that you think others should know about?

I don’t have any particular killer apps, as I don’t use my phone too frequently - it’s pretty old and is mainly used for some mobility apps (car / bicycle sharing), hangboard training, an alarm to get me going in the morning and PagerDuty to keep me awake at night. I’m also still a fan of good old SMS from time to time.

Hangboard? You must be a rock climber?

Not rock climbing, but just bouldering. I started a year ago and do it for fun. I like it a lot and the training/bouldering is very holistic, demands all kinds of muscles, core-strength, flexibility and most of all creativity :-)

If you could dictate that everyone in the world should read one book, what would it be?

Clean Code: A Handbook of Agile Software Craftsmanship - by Uncle Bob

If I gave you $10 million to invest in one thing right now, where would you put it?

I have a very strong “Enter” keypress and my Lenovo keyboard breaks about once a year and needs to be replaced (one can verify this by reading the comments here: youtube.com/watch?v=lN10hgl_Ts8). With that money at hand, I would ask Lenovo to design a stronger keyboard.

As I don’t have that money: In case someone from Lenovo is reading this, please make it more stable:

What are you currently learning?

As I am currently working in the field of DevOps/SRE I am mostly focusing on AWS, Terraform, Kubernetes and all kinds of automation around this area. Aside from that I’m catching up a lot with security, particularly with web application security and the whole ecosystem of tools. If I happen to not find a particular tool that fulfills my need, I usually dive all in and write it myself (mostly with Python). For instance I’ve written pwncat, because netcat was lacking some functionality I wanted for various hacking challenges: pwncat.org/. Incidentally this then turned into a full-blown project of its own. The most important things I took away from it were: threading/locking, networking, a Unix shell deep-dive and of course a lot of new Python skills.

What resources do you use to stay up to date on software engineering?

I usually stay sharp on Reddit, Twitter, Hacker News and Slashdot as well as a few Slack/Discord channels regarding DevOps, Python and security.

How do you separate good project ideas from bad ones?

I just don’t. I have so many ideas of things I could write and my computer is stuffed endlessly with unfinished projects. It is not necessarily a time waster to me, as I do learn a lot along the way, even if that project will never go live or is never used again. This is basically a method of teaching myself new stuff, which might not be the best, but somehow it does work for me.

Why was Devilbox started?

A few years back, I was working for an agency and had to work on a broad variety of different PHP projects every day. Some were just about quick and minor fixes and others were about adding full-blown features. As we were also hosting most of the projects ourselves, but had specific customer requirements (e.g. Apache vs. Nginx) or even totally different PHP major versions (legacy projects vs. greenfield), I was simply trying to make my everyday life easier by somehow automating this. At that time, Docker was gaining popularity and I saw the opportunity to combine learning this technology with solving my massive configuration issue locally. That’s when the very first basic version of Devilbox was born.

Oct 9th, 2016: github.com/cytopia/devilbox/commit/be1620e077b2edc087fbfc16a20ad4435bd25b67

Are there any overarching goals of Devilbox that drive design or implementation?

With Devilbox I’ve always tried to integrate as many different versions as possible, even older ones to achieve backwards compatibility with ancient projects. Over the years it went down as far as PHP 5.2.

One day, however, I received a feature request for PHP 1: github.com/cytopia/devilbox/issues/564

As stupid as this issue might have been, I wanted to see if it was possible. It took a whole weekend, but it was indeed possible and I dockerized PHP 1: github.com/devilbox/docker-php-1.0

This version is somehow totally different from all other PHP versions and after a little digging, the closest version I could find was PHP 1.99, which I then also dockerized: github.com/devilbox/docker-php-1.99s

The latter is fully working, but neither of these two projects made it into the official Devilbox project. The initial issue however is still pinned to remind me of this fun challenge.

What is the most challenging problem that’s been solved in Devilbox, so far?

The most challenging part I was facing, once I initially started Devilbox, was file and directory permission synchronization between containers and the host system.

Usually services such as Nginx or Apache run their master process as root and their child processes as some user with whatever uid/gid (example: 100:100). Now let’s imagine your local project directory on your host system is mounted into the web server Docker container and you do a file upload. The web server will store the uploaded file with the owner/group it is running as, which is most likely different from your local uid/gid. If you want to remove the file locally, you will get a permission denied error, forcing you to use sudo or root.
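The ownership mismatch described above can be made visible on the host with a few lines of Python. This is only an illustrative sketch for Unix-like systems; the function name is made up for this example and is not part of Devilbox.

```python
import os


def foreign_files(root, uid=None):
    """List paths under `root` whose owner differs from `uid`.

    `uid` defaults to the current user. Files written by a containerized web
    server running under another uid would show up in the result.
    """
    uid = os.getuid() if uid is None else uid
    found = []
    for dirpath, dirnames, filenames in os.walk(root):
        for name in dirnames + filenames:
            path = os.path.join(dirpath, name)
            # lstat inspects the link itself, so dangling symlinks do not raise.
            if os.lstat(path).st_uid != uid:
                found.append(path)
    return found
```

Running `foreign_files` on a mounted project directory after an upload would return the uploaded files whenever the container user and the host user do not share a uid.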

One possibility to fix this is to ensure the web server child processes run with the same uid/gid as your local host user/group. The way to go at that time was to specify the uid/gid of the web server within the Dockerfile. So, in order to set this to your local uid/gid, you had to adjust the Dockerfile (or use build args) and rebuild it to match your local setup.

This however is not very practical, as I wanted to ship pre-built Docker images that everybody could use without rebuilding them. To do so, it had to be achieved at Docker run-time, and I created a rather complex entrypoint script for the web server and PHP images that made this dynamic.

Under the hood most Devilbox images take env variables for uid and gid and will re-create the user with these attributes. Additionally, a lot of already existing files and directories within the container need to be adjusted so as not to lock out the services themselves.

For the PHP images the entrypoint script dealing with this situation can be seen here: github.com/devilbox/docker-php-fpm/blob/master/Dockerfiles/base/data/docker-entrypoint.d/101-uid-gid.sh

Another example for the nginx image is here: github.com/devilbox/docker-nginx-mainline/blob/master/data/docker-entrypoint.d/01-uid-gid.sh
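The scripts linked above are shell scripts and cover many more edge cases. As a rough illustration of the run-time approach only, the following Python sketch turns two environment variables into the commands an entrypoint could run. The variable names `NEW_UID` and `NEW_GID`, the user and group name `devilbox`, and the function name are assumptions made for this example; the sketch only builds the command list and executes nothing.

```python
def plan_uid_gid_sync(env, user, group, paths):
    """Return the commands an entrypoint could run to remap `user` and `group`.

    `env` is a mapping such as os.environ. NEW_UID and NEW_GID are assumed
    variable names. Nothing is executed here.
    """
    uid = env.get("NEW_UID")
    gid = env.get("NEW_GID")
    for label, value in (("NEW_UID", uid), ("NEW_GID", gid)):
        if value is not None and not (value.isascii() and value.isdigit()):
            raise ValueError(f"{label} must be a non-negative integer, got {value!r}")

    commands = []
    if gid is not None:
        commands.append(["groupmod", "-g", gid, group])
    if uid is not None:
        commands.append(["usermod", "-u", uid, user])
    if commands:
        # Re-own files created under the old ids so the service is not locked out.
        owner = f"{user}:{group}"
        commands.extend(["chown", "-R", owner, path] for path in paths)
    return commands
```

On the host side, a user would then pass their own ids into the container, for example with `-e NEW_UID=$(id -u) -e NEW_GID=$(id -g)` on `docker run`, again assuming those variable names.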

At first this seemed easier than expected, but I was facing many errors and a lot of edge cases reported by various users. I was quite busy fixing numerous reported issues on this feature. So, to get this as stable as possible I’ve created regression tests for each and every one of the reported issues and let them run during each PR. After some time, the feature has become stable and I can now change or refactor the code with confidence.

If you want to read more about the concept of synchronizing permissions in general, I’ve documented this in more detail here:

github.com/devilbox/docker-php-fpm#unsynchronized-permissions

What is your typical approach to debugging issues filed in the Devilbox repo?

If this is about debugging issues and Linux, it is very straightforward. I have endless CI checks that will give me a first hint in case something fails and I’m also able to replicate it locally 1:1, as all checks are dockerized to ensure what I do locally is done on CI in the exact same way.

When it comes to Windows and macOS, it is more complicated as I don’t have those systems or licenses at hand and rely on other people running Devilbox on those.

What is the release process like for Devilbox?

I’m a big fan of semver and stick to it as closely as possible. Most of the time I release bug fixes when any of the CI pipelines fail - and they fail a lot for various projects (see the Devilbox organization for involved projects: github.com/devilbox/).

Even just bug fix releases are time-consuming as you want to have regression tests for them to save time in the future.

Feature requests take even longer and I need to make sure that they are fully backwards compatible and that every new feature has been documented properly in readthedocs: devilbox.readthedocs.io/.
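For readers unfamiliar with semver, the distinction between bug fix releases and backwards compatible feature releases maps onto version numbers roughly as in this minimal Python sketch. The function and the change labels are made up for this example and are not part of any Devilbox tooling; pre-release and build suffixes are ignored.

```python
def bump(version, change):
    """Return the next MAJOR.MINOR.PATCH version for a given kind of change."""
    major, minor, patch = (int(part) for part in version.split("."))
    if change == "breaking":  # incompatible change
        return f"{major + 1}.0.0"
    if change == "feature":   # backwards compatible addition
        return f"{major}.{minor + 1}.0"
    if change == "fix":       # backwards compatible bug fix
        return f"{major}.{minor}.{patch + 1}"
    raise ValueError(f"unknown change type: {change!r}")


print(bump("1.9.0", "fix"), bump("1.9.1", "feature"), bump("1.10.0", "breaking"))
```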

For anything that requires manual steps after a new release, I have an updating document that describes each step in detail: github.com/cytopia/devilbox/blob/master/UPDATING.md

Is Devilbox intended to eventually be monetized if it isn’t monetized already?

I don’t have any plans to monetize it. That would most likely discourage new people from using it and I personally find it a great tool, especially for “young” developers. Besides, it is based completely on other free open-source software. I also use it myself - not as often anymore as a few years back, but still enough to keep it up, and I wouldn’t like to put pressure on myself to act on requests people have paid for. It is still a hobby project and I enjoy working on it.

From time to time I do receive some requests from people offering to pay to implement feature X or Y, but that’s not something I want to or can keep up with, as I still have a full-time job as well.

Nonetheless, I do have a GitHub sponsor button attached to that project and would love it if people donated to it.

If you plan to continue developing Devilbox, where do you see the project heading next?

I have endless ideas, but unfortunately time is a limiting factor. The most urgent thing I have to do is to minimize code and make everything smaller, while keeping all edge-cases covered. Docker images have grown quite a lot and need a redesign, or, more specifically, I will have to create more flavors allowing for small, medium, and big images that a user can pick from.

Another urgent major feature I have to tackle is the performance on macOS, which is still an issue in 2021. This is not a problem with Devilbox per se, but rather with the macOS implementation of Docker. There are various solutions out there to overcome this, but they require some heavy lifting on my end (github.com/cytopia/devilbox/issues/105).

Also the AutoDNS feature is something that requires a major rewrite, which I’m currently working on. Once in place, it will allow me to remove all kinds of port-forwards from the PHP container, which will play nicely with minimizing code (github.com/cytopia/devilbox/issues/248).

Another feature that is currently being worked on is the separation of the Devilbox intranet from the main project. This would allow the community to create different frontends or even submit changes more easily. The frontend part has already been done by GitHub user hurrtz and is available in the develop branch here: github.com/devilbox/web-ui/tree/develop. What is left to do on my end is to create the API endpoints in the Devilbox repository itself.

Like what you saw here? Why not share it?

Or, better yet, share Console!

Also, don’t forget to subscribe to get a list of new open-source projects curated by an Amazon software engineer directly in your email every week.